Load Balancing and Failover for High-Volume Transactions

Introduction: Scaling Beyond the “Single Pipe”

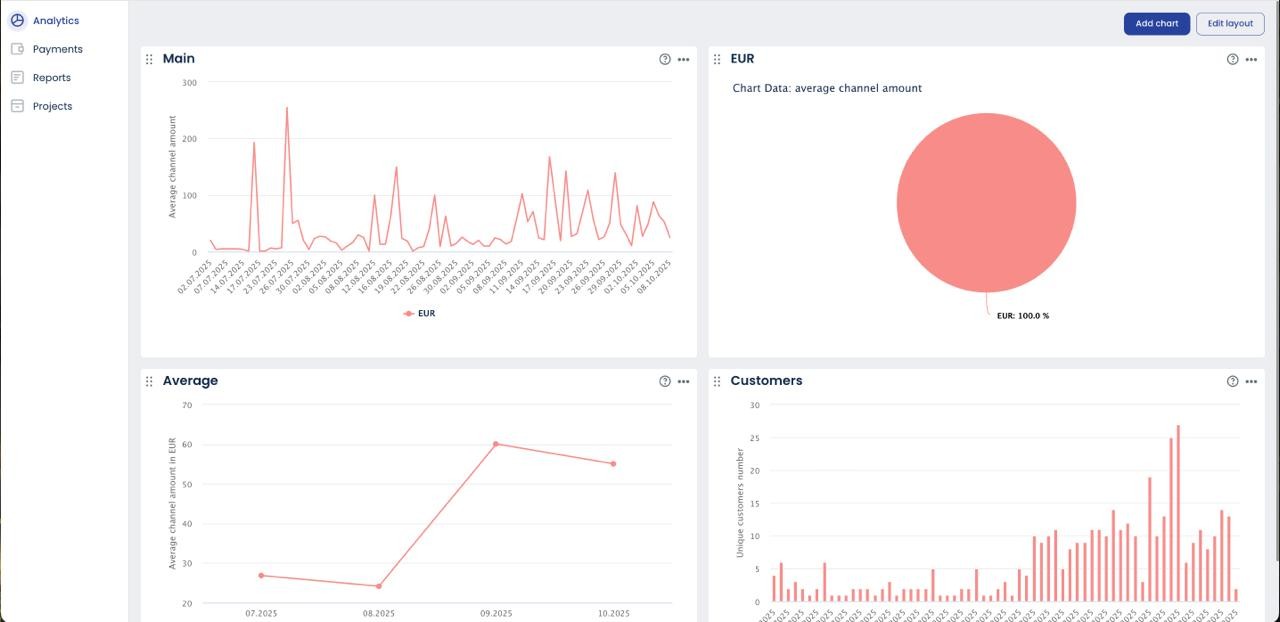

We are accustomed to scaling application tiers horizontally by adding instances, but that model breaks down when applied to payment processing. The primary bottleneck is not your CPU or network; it is the hard constraints imposed by your acquiring partners. Every acquirer enforces transactions-per-second (TPS) limits and monthly volume caps that put a ceiling on your growth. For high-volume merchants, a single processing relationship is not a strategy; it is a single point of failure. The solution is to apply infrastructure principles to financial routing. True payment gateway load balancing is not about distributing server load; it is about programmatically distributing financial volume and risk across multiple Merchant IDs (MIDs). This is the core discipline required to build high-availability payments, where an uptime target such as 99.999% does not depend on any single provider’s status. This architecture is the logical next step beyond a basic business continuity plan (BCP), as discussed in our brief on Business Continuity for Payments.

The Business Case: Why Load Balance MIDs?

For high-volume operations, payment gateway load balancing is not a technical optimization; it is a financial and operational necessity driven by the rigid constraints of the acquiring world. The business case is built on two primary factors that directly impact revenue and viability.

First is the management of merchant ID volume caps. Acquirers, particularly in high-risk verticals, impose hard monthly processing limits on individual MIDs. A single MID capped at €500k per month creates an absolute ceiling on your growth. A load balancing strategy that distributes traffic across three such MIDs immediately triples your processing capacity to €1.5M, transforming an operational roadblock into a scalable architecture.
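A minimal sketch of cap-aware MID selection, assuming each MID tracks its own month-to-date volume. The `Mid` class, the `pick_mid` helper, and the MID names are hypothetical and exist only for illustration; amounts are held in euro cents to avoid floating-point rounding.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Mid:
    mid_id: str          # hypothetical identifier for one merchant account
    monthly_cap: int     # acquirer-imposed monthly cap, in euro cents
    processed: int = 0   # volume already processed this month, in euro cents

    def headroom(self) -> int:
        """Volume that can still be processed before the cap is reached."""
        return self.monthly_cap - self.processed


def pick_mid(mids: List[Mid], amount: int) -> Optional[Mid]:
    """Return the MID with the most headroom that can still absorb `amount`."""
    candidates = [m for m in mids if m.headroom() >= amount]
    if not candidates:
        return None  # every MID is at or near its cap
    return max(candidates, key=Mid.headroom)


# Three MIDs capped at EUR 500k each, as in the example above.
mids = [
    Mid("mid-a", 500_000_00),
    Mid("mid-b", 500_000_00),
    Mid("mid-c", 500_000_00),
]
total_capacity = sum(m.monthly_cap for m in mids)  # EUR 1.5M, in cents
```

Choosing the MID with the most headroom is only one possible policy; in practice you would combine the cap check with the routing rules described later in this article.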

Second, and more critically, is risk dilution. Card schemes like Visa and Mastercard enforce strict chargeback ratio thresholds (typically around 1%). Processing €1M in volume with €11k in chargebacks on a single MID results in a 1.1% ratio, triggering fines and potential account termination. Splitting that volume evenly across four MIDs (€250k each) does not change the arithmetic on its own: each account would still carry €2.75k in chargebacks, or 1.1%. The benefit comes from how traffic is segmented. Routing the segments that generate the most disputes to a dedicated MID keeps the ratio on the remaining accounts at a compliant level, and a breach on one MID no longer puts your entire processing capacity at risk. This is not about evading responsibility; it is a required risk management strategy to maintain good standing across your entire acquiring portfolio.
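It is worth checking the chargeback arithmetic before relying on it. The sketch below uses the €1M and €11k figures from the text; the segmentation numbers and MID names are invented for illustration. It follows the article in computing the ratio on euro amounts, whereas scheme monitoring programs generally work on transaction counts.

```python
def chargeback_ratio(chargebacks_eur: float, volume_eur: float) -> float:
    """Chargeback amount divided by processed volume for one MID."""
    return chargebacks_eur / volume_eur


# Figures from the text: EUR 1M processed, EUR 11k in chargebacks, one MID.
single_mid = chargeback_ratio(11_000, 1_000_000)

# Even split across four MIDs: volume and chargebacks shrink together,
# so every MID keeps the same ratio as the single account.
even_split = [chargeback_ratio(11_000 / 4, 1_000_000 / 4) for _ in range(4)]

# Hypothetical segmentation (numbers invented for illustration): one traffic
# segment produces EUR 5k of the chargebacks on EUR 200k of volume and gets
# its own MID; the remaining EUR 800k / EUR 6k is spread over three MIDs.
segmented = {
    "mid-segment-x": chargeback_ratio(5_000, 200_000),
    "mid-1": chargeback_ratio(6_000 / 3, 800_000 / 3),
    "mid-2": chargeback_ratio(6_000 / 3, 800_000 / 3),
    "mid-3": chargeback_ratio(6_000 / 3, 800_000 / 3),
}

for name, ratio in segmented.items():
    print(f"{name}: {ratio:.2%}")
```

Under these assumed numbers the even split leaves all four MIDs at 1.1%, while the segmented layout puts three MIDs at 0.75% and concentrates the problem on one account at 2.5%, where it can be managed separately.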

Routing Algorithms: From Simple to Smart

Not all distribution logic is created equal. The sophistication of your routing algorithm directly determines the financial efficiency and approval rate of your entire payment stack. Simply distributing traffic evenly is a primitive approach that leaves significant money on the table.

- Round Robin: This is the most basic algorithm, sending transactions to each available provider in a simple, sequential loop. While it achieves distribution, it is “dumb” logic, completely blind to performance or cost. It treats a high-performing, low-cost Tier-1 acquirer and a high-cost, backup acquirer as equals, which is operationally inefficient.

- Weighted/Priority Routing: This is the next logical step, allowing you to assign a weight to each provider. For example, 80% of volume is directed to your primary, low-cost acquirer, while 20% goes to a secondary provider with higher approval rates but higher fees. This is a cost-optimization strategy.

- Parameter-Based (Smart) Routing: This is the gold standard. Here, the routing decision is made dynamically based on the transaction’s metadata. The most effective form of this is BIN routing. The Bank Identification Number (BIN), the leading six to eight digits of the card number, identifies the issuing bank and, through a BIN lookup table, its country. Smart routing logic uses this data to direct a German card to your German acquirer or a US card to your US acquirer. This practice of local processing tends to increase approval rates, as cross-border transactions are often viewed with higher suspicion by issuing banks.
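The three strategies above can be sketched in a few lines. This is an illustration under stated assumptions, not a production router: the acquirer names are hypothetical, the BIN prefixes in `BIN_COUNTRY` are placeholders rather than real issuer assignments (a real system would query a licensed BIN database), and the 80/20 weights mirror the example in the text.

```python
import itertools
import random
from typing import Dict, Iterator, List, Optional

# Hypothetical configuration; none of these names refer to real providers.
WEIGHTS: Dict[str, float] = {"acq_primary": 0.8, "acq_secondary": 0.2}
BIN_COUNTRY: Dict[str, str] = {"400000": "DE", "510000": "US"}  # placeholders
LOCAL_ACQUIRER: Dict[str, str] = {"DE": "acq_de", "US": "acq_us"}


def round_robin(acquirers: List[str]) -> Iterator[str]:
    """Round robin: cycle through the acquirers in order, forever."""
    return itertools.cycle(acquirers)


def weighted_choice(weights: Dict[str, float], rng: random.Random) -> str:
    """Weighted routing: pick an acquirer with probability equal to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def route(card_number: str, weights: Dict[str, float], rng: random.Random) -> str:
    """BIN routing first; fall back to weighted routing for unknown BINs."""
    country: Optional[str] = BIN_COUNTRY.get(card_number[:6])
    local = LOCAL_ACQUIRER.get(country) if country is not None else None
    if local is not None:
        return local  # a local acquirer exists for the issuing country
    return weighted_choice(weights, rng)
```

A call such as `route("4000001234567890", WEIGHTS, random.Random())` returns `acq_de` under the placeholder table, while a card whose prefix is not in the table falls through to the 80/20 split. Passing the random generator in explicitly keeps the weighted path testable.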

Failover Logic: Implementing the Circuit Breaker

Robust payment gateway load balancing is not just about distributing volume during normal operation; it is about intelligently managing failure. The most resilient architecture for handling provider downtime borrows the circuit breaker pattern from microservices. This is an automated, stateful mechanism that prevents your system from repeatedly calling a failing service.

It is crucial to differentiate between a transaction decline and a service failure. A soft decline like “Insufficient Funds” is a valid response and should not trigger a failover. A hard failure—a 503 Service Unavailable error, a socket timeout, or a DNS resolution failure—is a signal that the provider is unstable.
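One way to encode this distinction is a small classifier that runs on every provider response before any failover decision is made. The `Outcome` enum and `classify` function are hypothetical names, and treating every 5xx status as a hard failure is an assumption that generalizes the 503 example above; your providers’ error semantics may differ.

```python
import socket
from enum import Enum
from typing import Optional


class Outcome(Enum):
    APPROVED = "approved"
    DECLINED = "declined"          # valid business response, no failover
    HARD_FAILURE = "hard_failure"  # provider looks unstable, counts toward failover


# Transport-level errors treated as hard failures: timeouts, DNS resolution
# failures (socket.gaierror), and refused or reset connections.
HARD_FAILURE_ERRORS = (TimeoutError, socket.timeout, socket.gaierror, ConnectionError)


def classify(
    http_status: Optional[int],
    error: Optional[BaseException] = None,
    approved: bool = False,
) -> Outcome:
    """Map one provider call to an outcome the failover logic can act on."""
    if error is not None and isinstance(error, HARD_FAILURE_ERRORS):
        return Outcome.HARD_FAILURE
    if http_status is None or http_status >= 500:
        # Assumption: no response at all, or any 5xx, signals instability.
        return Outcome.HARD_FAILURE
    return Outcome.APPROVED if approved else Outcome.DECLINED
```

Only `HARD_FAILURE` outcomes should feed the failure counter; a decline such as “Insufficient Funds” is returned to the caller unchanged.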

The logic is a three-state state machine:

- Closed: The circuit is closed, and traffic flows normally. The system monitors for hard failures. If the failure rate exceeds a configured threshold (e.g., more than five timeouts in ten seconds), the circuit “trips.”

- Open: The circuit is now open. For a set cool-down period (e.g., five minutes), 100% of traffic is immediately routed to the secondary provider. No calls are made to the failing primary, giving it time to recover.

- Half-Open: After the cool-down period expires, the circuit allows a single “probe” transaction through to the primary. If it succeeds, the circuit closes and normal routing resumes. If it fails, the circuit opens again and the cool-down period restarts.

This is conceptually similar to how health checks work in systems like AWS Elastic Load Balancing, providing an automated, self-healing transaction failover capability.
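The state machine can be sketched as a small class. This is a single-threaded illustration under stated assumptions: the `CircuitBreaker` class and its method names are hypothetical, the defaults reuse the example values from the text (more than five hard failures in ten seconds, five-minute cool-down), and the clock is injectable so the behavior can be tested without waiting.

```python
import time
from collections import deque
from typing import Callable, Deque


class CircuitBreaker:
    def __init__(
        self,
        max_failures: int = 5,        # trips when failures in window exceed this
        window_s: float = 10.0,       # sliding window for counting failures
        cooldown_s: float = 300.0,    # time spent open before probing
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_failures = max_failures
        self.window_s = window_s
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.state = "closed"
        self.failures: Deque[float] = deque()
        self.opened_at = 0.0

    def allow_primary(self) -> bool:
        """True if the next transaction may be sent to the primary provider."""
        if self.state == "open" and self.clock() - self.opened_at >= self.cooldown_s:
            self.state = "half_open"  # exactly one probe is let through
            return True
        return self.state == "closed"

    def record_success(self) -> None:
        if self.state == "half_open":  # probe succeeded: resume normal routing
            self.state = "closed"
            self.failures.clear()

    def record_hard_failure(self) -> None:
        now = self.clock()
        if self.state == "open":
            return                     # late result from an in-flight call
        if self.state == "half_open":  # probe failed: restart the cool-down
            self._trip(now)
            return
        self.failures.append(now)
        while self.failures and now - self.failures[0] > self.window_s:
            self.failures.popleft()    # drop failures outside the window
        if len(self.failures) > self.max_failures:
            self._trip(now)

    def _trip(self, now: float) -> None:
        self.state = "open"
        self.opened_at = now
        self.failures.clear()
```

The caller checks `allow_primary()` before each transaction and routes to the secondary provider whenever it returns `False`. A production version would also need locking or an atomic shared store if several workers share one breaker.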

The ‘Sticky Session’ Problem in Payments

Unlike stateless web traffic, payment requests are part of a stateful transaction lifecycle. A standard load balancer cannot manage this because subsequent actions like captures, voids, and refunds are not independent events; they are children of the original authorization. This creates the “sticky session” problem: if an authorization is routed to Acquirer A, the corresponding capture or refund request must be routed to Acquirer A. Attempting to refund a transaction through Acquirer B that was authorized by Acquirer A will fail.

Solving this requires a change at the application layer. Standard payment gateway load balancing can be used for the initial authorization, but upon a successful response, your system must persist the acquirer_id or gateway_id alongside the transaction_id in your database. All subsequent lifecycle API calls for that transaction must then bypass the load balancing logic and be routed directly to the specific gateway that holds the original authorization. This ensures transactional integrity across a multi-acquirer environment.
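A minimal sketch of this pinning logic, assuming an in-memory dictionary in place of your database table. The `TransactionRouter` class and its method names are hypothetical; the `acquirer_id` and `transaction_id` fields follow the naming used above.

```python
from typing import Callable, Dict

LIFECYCLE_ACTIONS = {"capture", "void", "refund"}


class TransactionRouter:
    def __init__(self, choose_acquirer: Callable[[], str]) -> None:
        # `choose_acquirer` is whatever load balancing policy you use
        # for new authorizations (round robin, weighted, BIN-based, ...).
        self._choose_acquirer = choose_acquirer
        # Stand-in for a database table mapping transaction_id -> acquirer_id.
        self._acquirer_by_txn: Dict[str, str] = {}

    def authorize(self, transaction_id: str) -> str:
        """Route a new authorization through the load balancer and pin the result."""
        acquirer_id = self._choose_acquirer()
        # A real system would call the acquirer here and persist the mapping
        # only after a successful authorization response.
        self._acquirer_by_txn[transaction_id] = acquirer_id
        return acquirer_id

    def route_lifecycle(self, transaction_id: str, action: str) -> str:
        """Return the acquirer that must handle a capture, void, or refund."""
        if action not in LIFECYCLE_ACTIONS:
            raise ValueError(f"unsupported lifecycle action: {action}")
        try:
            # Bypass load balancing: always use the original acquirer.
            return self._acquirer_by_txn[transaction_id]
        except KeyError:
            raise LookupError(f"no authorization found for {transaction_id}") from None
```

The key design choice is that `route_lifecycle` never consults the load balancing policy, so changing weights or tripping a circuit breaker cannot redirect a refund away from the acquirer that holds the original authorization.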

Conclusion: Architecture is the Ultimate Reliability

Ultimately, high availability in payment processing is not a feature you inherit from a provider; it is a capability you design into your own stack. Relying on a single acquirer’s uptime is an architectural decision to accept failure. The strategic choice is not if you need this reliability layer, but whether you will dedicate significant and ongoing engineering resources to building and maintaining this complex load balancing and failover logic in-house. A mature payment orchestration platform treats this as a solved problem, abstracting away the low-level complexity. This allows your team to focus on core product innovation rather than financial infrastructure plumbing. Sola’s built-in orchestration engine delivers these cascading and smart routing capabilities out-of-the-box. For a review of foundational principles, see our secure integration guide.